Automated discrimination: Facebook uses gross stereotypes to optimize ad delivery

An experiment by AlgorithmWatch shows that online platforms optimize ad delivery in discriminatory ways. Advertisers who use them could be breaking the law.

Online platforms are well known for the refinement of their advertisement engines, which let advertisers address very specific audiences. Facebook lets you target the friends of people who have their birthdays next week, for instance. Until journalists uncovered it, it also let you exclude people of a given race from seeing an ad.

The platforms do not stop there. They perform a second round of targeting, or optimization, without the advertiser’s knowledge.

AlgorithmWatch advertised on Facebook and Google for the following job offers: machine learning developers, truck drivers, hairdressers, child care workers, legal counsels and nurses. All ads used the masculine form of the occupations and showed a picture related to the job. We conducted the experiment in Germany, Poland, France, Spain and Switzerland. The ads led to actual job offers on Indeed, a job portal with a local presence in each of these countries.

For each ad, absolutely no targeting was used, apart from geographical area, which is mandatory.

We did not expect our ads to be shown to men and women in equal proportion. But we did expect all of our ads to be shown to roughly the same audience. After all, we did not target anyone in particular.

“Optimization”

Facebook in particular, and Google to some extent, targeted our ads without asking for our permission. In Germany, our ad for truck drivers was shown on Facebook to 4,864 men but only to 386 women. Our ad for child care workers, which was running at exactly the same time, was shown to 6,456 women but only to 258 men.

Our data is available online.
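
To make the imbalance concrete, the impression counts quoted for Germany can be turned into shares. The short Python sketch below uses only the four figures from this article; the function name `gender_shares` is our own, and the calculation assumes that the men and women counted are the only impressions of interest.

```python
def gender_shares(men: int, women: int) -> dict[str, float]:
    """Return the share of impressions shown to men and to women."""
    total = men + women
    if total == 0:
        raise ValueError("no impressions to split")
    return {"men": men / total, "women": women / total}


# Impression counts for Germany, as quoted in the article.
truck = gender_shares(men=4864, women=386)
child_care = gender_shares(men=258, women=6456)

print(f"Truck drivers: {truck['men']:.1%} of impressions went to men")
print(f"Child care workers: {child_care['women']:.1%} of impressions went to women")
```

Under these assumptions, about 93% of the truck driver impressions went to men and about 96% of the child care impressions went to women, although neither ad was targeted by gender.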

When advertisers buy space on Google or Facebook, they place bids, as in an auction. When users load their newsfeed or perform a search, the platforms display the ads of the highest bidder for a given user.

It would be impractical if advertisers competed against all others each time someone refreshed their newsfeed. Platforms “optimize” the bidding process so that advertisers only compete for people who have a larger chance of clicking on a given ad. This way, advertisers are more likely to bid for people who will click on their ads, and platforms can drain their advertising budgets more quickly.
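
The logic described above can be illustrated with a toy model. The Python sketch below is not Facebook's or Google's actual system, whose details are not public. It assumes a hypothetical rule in which an ad only enters the auction for a user if the platform's predicted click probability passes a threshold, after which the highest bid wins. The names `Ad` and `run_auction`, the threshold and all numbers are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Ad:
    name: str
    bid: float  # hypothetical amount the advertiser offers


def run_auction(ads, click_probability, min_probability=0.05):
    """Pick the winning ad for one user, or None if no ad is eligible.

    click_probability maps an ad name to the platform's (hypothetical)
    predicted chance that this user clicks on that ad.
    """
    # "Optimization" step: drop ads the user is judged unlikely to click.
    eligible = [
        ad for ad in ads
        if click_probability.get(ad.name, 0.0) >= min_probability
    ]
    if not eligible:
        return None
    # Auction step: among the remaining ads, the highest bid wins.
    return max(eligible, key=lambda ad: ad.bid)


ads = [Ad("truck driver jobs", bid=1.00), Ad("child care jobs", bid=0.80)]

# Invented predictions for two users.
user_a = {"truck driver jobs": 0.10, "child care jobs": 0.01}
user_b = {"truck driver jobs": 0.01, "child care jobs": 0.12}

winner_a = run_auction(ads, user_a)
winner_b = run_auction(ads, user_b)
print(winner_a.name)
print(winner_b.name)
```

In this sketch the truck driver ad carries the higher bid, yet the second user never sees it, because the prediction step removed it before any bidding took place. If such predictions correlate with gender, the audience is skewed without the advertiser having targeted anyone.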

In our experiment, the 4,864 men and 386 women who were shown our ad for truck driver jobs were those Facebook thought had the highest likelihood of clicking on it.

Fateful pictures

We attempted to find out how Facebook computes this likelihood of clicking on ads. In another experiment, we advertised a page listing truck driver jobs, but we changed the images and the text of the ad.

The ads ran in France. In one ad, we used a gendered form for “truck driver” (chauffeur·se). In another, we used only the feminine form (chauffeuse). In a third one, we showed a picture of a country road instead of a truck. Finally, we showed a picture of cosmetics.

The gender split of our ad impressions shows that Facebook relied mostly on the images to decide whom to show the ad to.

Google might discriminate less, but it’s unclear

The ads we bought on Google exhibited similar patterns, but they were much more limited (the difference between the ad most shown to women and the ad most shown to men was never larger than 20 percentage points). More importantly, the gender optimization on Google did not follow a consistent pattern. In Germany, for instance, the ad for truck drivers was the one most shown to women.

However, Google did not let us place “unlimited” bids on our ads. We had to manually set how much we were ready to pay for each person who clicked on the ad. With unlimited bids, the platform automatically decides how much to offer for a click, based on the advertiser’s budget. The results from the two platforms are not directly comparable.

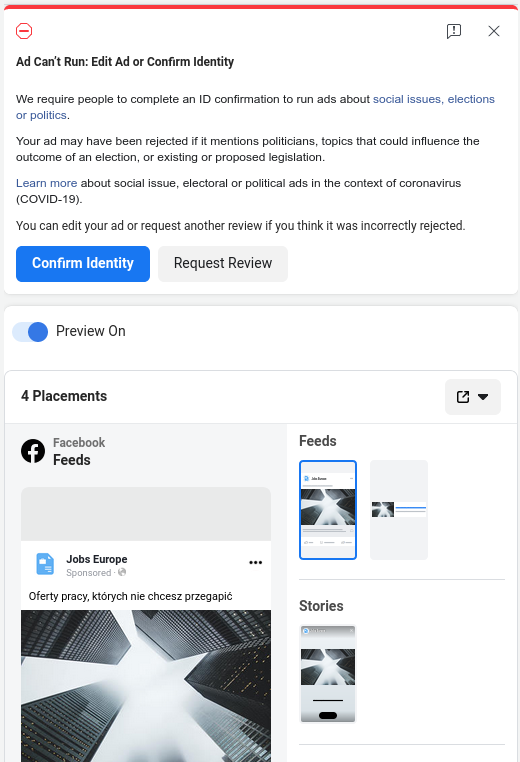

Our Facebook experiment also suffered from the platform’s arbitrary enforcement of its policies. In Spain, all our ads were suspended after a few hours under the pretense that they were a “get rich quick scheme”. In Poland, two of our ads were suspended after Facebook decided that they were about “social issues, elections or politics”.

Replicating the past

Our findings confirm those by a team from Northeastern University in the United States. In 2019, they showed that Facebook’s ad optimization discriminated in possibly illegal ways.

Piotr Sapiezynski, one of the co-authors, told AlgorithmWatch that Facebook was certainly learning from past data. He noticed that the gender distribution of ads did not change much over the lifetime of an ad. Instead, Facebook immediately decides whom to show the ad to, as soon as the ad is published. It is likely that Facebook makes predictions on who might click on an ad based on how users reacted to similar ads in the past.

“The mechanism is inherently conservative”, Mr Sapiezynski said. “It predicts that the past will repeat”. Because it decides what users see when they visit Facebook properties, “it also creates the future”, he added. And in doing so, it deprives people of opportunities.

Possibly illegal

Automated targeting could help advertisers in many ways. Job offers for licensed professions, for instance, could be shown only to people holding the required degrees. However, European law forbids discrimination based on a series of criteria, such as race and gender.

It is easy to see why. If a job offer for a position of nurse or childcare worker were only advertised on Facebook, male candidates would have little chance of ever applying.

AlgorithmWatch talked to several experts in anti-discrimination legislation. They agreed that Facebook’s, and possibly Google’s, automated targeting constituted indirect discrimination.

The ethical perspective

Notwithstanding legal implications, these systems contribute to discrimination in an ethical sense, Tobias Matzner, a professor of Media, Algorithms and Society at Paderborn University, told AlgorithmWatch.

“Such processes clearly contribute to discrimination, especially structural forms of discrimination where certain parts of the populace are kept from certain social areas,” he said. “This example shows how our entire Facebook or Google usage history could one day determine which job we get. It would not come about by a negative verdict by a powerful company, but because the data in our history and the data in the platforms' predictors combine in a way that we don't see the job offer in the first place.”

Furthermore, the 2019 experiment at Northeastern University showed that Facebook also discriminated on race. We were not able to verify that this was also the case in Europe, but Mr Sapiezynski saw no reason why Facebook would not use the same mechanism everywhere.

Hard to prove

Although it is forbidden, this discrimination might be impossible to prove for the people affected, such as a female truck driver who is looking for a new position but to whom Facebook does not show any offers.

Current legislation puts the burden of proof on complainants. However, Facebook users have no way of knowing what job offers they were not shown, and advertisers have no way of ensuring that their ads are shown in a way that does not discriminate illegally. The German anti-discrimination authority called for an update in the legislation that would solve this conundrum, but change has yet to appear on the legislative horizon.

In the United States, housing rights activist Neuhtah Opiotennione attempted to file a class action lawsuit against Facebook’s ad delivery discrimination, but the California judge handling the matter is unlikely to let it go forward.

No-go zone

Google did not respond to multiple requests for comment. They did, however, suspend our advertiser account a few days after we sent our questions, arguing that we “circumvented Google's advertising systems and processes”, which we did not.

Facebook did not answer our questions, but, in what might have been an attempt at intimidation, asked to see all our findings and asked for the names of the journalists AlgorithmWatch was working with.

Moritz Zajonz contributed to this story.

This investigation received support from JournalismFund.eu
