Nicolas Kayser-Bril

Reporter

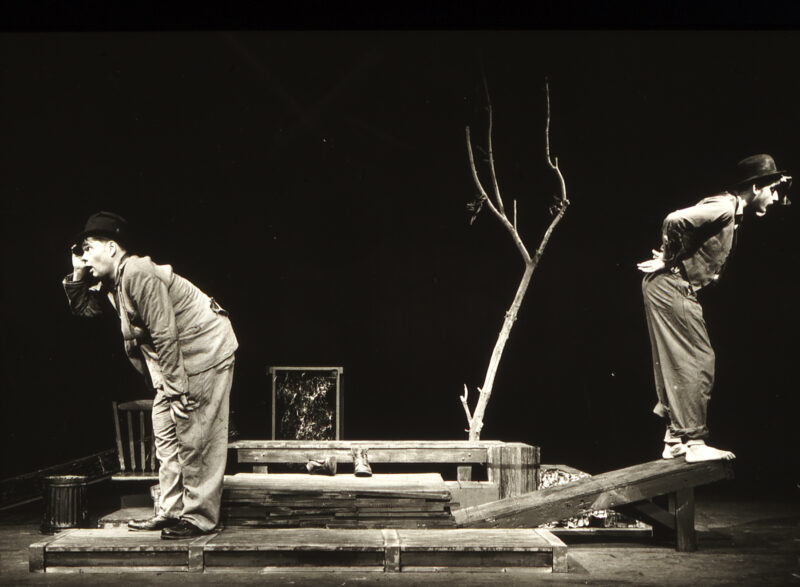

Photo: Julia Bornkessel, CC BY 4.0

French, English, German

kayser-bril@

PGP Public Key

https://social.nkb.fr/@

Nicolas is a French-German journalist. He pioneered data-driven journalism in Europe, regularly speaks at international conferences, and has taught at several journalism schools in France, Switzerland and Russia. A self-taught developer, he created interactive, data-driven applications for Le Monde. He built the data journalism team at OWNI and co-founded and managed Journalism++ from 2011 to 2017. Nicolas was one of the main authors of the Datajournalism Handbook.

Articles for AlgorithmWatch

How not to: We failed at analyzing public discourse on AI with ChatGPT

The year we waited for action: 2023 in review

Some image generators produce more problematic stereotypes than others, but all fail at diversity

AlgorithmWatch welcomes first fellows in algorithmic accountability reporting

The year automated systems might have been regulated: 2022 in review

Wolt: Couriers’ feelings don’t always match the transparency report

Algorithmic elections: How automated systems quietly disenfranchise voters

Mastodon could make the public sphere less toxic, but not for all

The fediverse is growing, but power imbalances might stay

How researchers are upping their game to audit recommender systems

AlgorithmWatch is offering 5 fellowships in algorithmic accountability reporting

Meta is sued for abetting fraud, and they don’t want you to know about it

Face recognition data set of trans people still available online years after it was supposedly taken down

A European newsroom to investigate automated systems

The year that was not saved by automated systems – 2021 in review

Subscribe to the Automated Society newsletter

European Council and Commission in agreement to narrow the scope of the AI Act

Facebook goes after the creator of InstaPy, a tool that automates Instagram likes

National parks near Marseilles deploy automated, live video surveillance against poachers

An English police force created its own ethics committee and it’s totally not ethics washing, they say.

YouTube cleaned its ‘news’ section… with content from Axel Springer

Instagram algorithm: Süddeutsche publishes results of data analysis

LinkedIn automatically rates “out-of-country” candidates as “not fit” in job applications