Watching the watchers: Epstein and Robertson’s „Search Engine Manipulation Effect“

By Katharina A. Zweig

1. Watching the watchers: Epstein and Robertson’s „Search Engine Manipulation Effect“

In July 2015, Epstein and Robertson published a widely noted study on how strongly a biased search engine result ranking can shift undecided voters towards one candidate [1]. In their abstract, they summarize that “biased search rankings can shift the voting preferences of undecided voters by 20% or more” [1]. In various articles and interviews, Epstein refers to his study and states that “as many as 80% of voters in some demographic groups” can be shifted (e.g., in an exclusive article by Epstein himself for Sputnik News [2]). Many established newspapers and magazines quote the authors’ results, e.g., Sandro Gaycken in an article for the Süddeutsche Zeitung: “Epstein was able to prove that targeted manipulation of the search results for queries on political topics results in a shift of opinion in favor of the manipulator. A very effective kind of manipulation, as it seems: more than a quarter of the users changed their opinion.” (“Epstein konnte nachweisen, dass die gezielte Manipulation der Ergebnisse von Websuchen zu politischen Themen in Suchmaschinen eine Meinungsänderung zugunsten des Manipulators bewirkt. Eine sehr effektive Art der Beeinflussung, wie es scheint: Mehr als ein Viertel der Suchenden änderten ihre Haltung.”) [3].

In this article, I will show that the results are strongly exaggerated because they are based on a measure which sums up the effects of biasing the results towards each of the candidates instead of averaging them or stating the range of possible effects. In the most realistic of the three studies conducted by Epstein and Robertson, the single effects are within the range of 2-4%. This is still an important effect, especially for elections which are as close as the ones often seen in the USA, but it is less worrying for European countries with multiple parties.

1.1 Introduction to Epstein and Robertson’s paper

The general structure of the studies of Epstein and Robertson is the following: there are either two or three candidates to vote for. For each candidate, there is a set of possible search engine results, i.e., texts about the candidate. These texts were rated by independent reviewers as being either in favor of the candidate or rather against the candidate. Based on these ratings, lists with different orders of the texts were created that were either neutral or biased towards one of the candidates, as described in the following.

1.1.1 Creating the artificial search result lists

To make a biased list, one of the candidates is chosen and the results favoring this candidate are put at the front of the list, while the results of the other candidate(s) are ranked in reverse order. In the middle of the list, there are thus the most unfavorable texts, first those about the favored candidate and then those about the non-favored candidate. At the end of the list, the texts favoring the unwanted candidate are shown. It is not totally clear how the list was built for the study with three candidates, but in each case, the lists start with the most favorable texts about the favored candidate.

In the studies with only two candidates, there was additionally a carefully constructed list in which positive results of the two candidates were presented alternately. This list is used as a ‘control’ list which does not favor either of the two candidates.
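The two list constructions described above can be sketched as follows. This is only an illustration under assumptions: the titles and favorability scores are hypothetical, the function names `biased_list` and `alternating_list` are made up for this sketch, and the exact procedure used by Epstein and Robertson may differ in its details.

```python
from itertools import zip_longest

# Hypothetical texts as (title, score); a higher score means the text is
# more favorable to the candidate it is about. These values are invented.
texts_a = [("A1", 3), ("A2", 1), ("A3", -2)]
texts_b = [("B1", 2), ("B2", -1), ("B3", 0)]


def biased_list(favored, other):
    """Favored candidate's texts from most to least favorable, followed by
    the other candidate's texts from least to most favorable."""
    front = sorted(favored, key=lambda t: t[1], reverse=True)
    back = sorted(other, key=lambda t: t[1])
    return [title for title, _ in front + back]


def alternating_list(cand_a, cand_b):
    """Control-style list: take texts of both candidates in turns,
    each candidate's texts ordered from most to least favorable."""
    a = sorted(cand_a, key=lambda t: t[1], reverse=True)
    b = sorted(cand_b, key=lambda t: t[1], reverse=True)
    result = []
    for x, y in zip_longest(a, b):
        if x is not None:
            result.append(x[0])
        if y is not None:
            result.append(y[0])
    return result


print(biased_list(texts_a, texts_b))       # list biased towards A
print(biased_list(texts_b, texts_a))       # list biased towards B
print(alternating_list(texts_a, texts_b))  # control list
```

In the list biased towards A, the least favorable texts of both candidates meet in the middle and the text most favorable to B ends up last, which matches the verbal description.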

1.1.2 The study setting

In all three study settings, the participants were asked, after a short introduction of the candidates, whom they would vote for. Then, they were presented with a biased or unbiased search result ranking and asked again whom they would vote for (pre-bias and post-bias vote). The artificial search result list was presented in a search-engine-like environment, in which it was possible to go through the different result pages and to follow the links to the real webpages.

In the first study, some US American participants were asked to make a decision between two Australian politicians, neither of whom was well known to the participants (as shown in the paper).

The second study was conducted online in the USA, with respect to the same two Australian politicians. The set of participants was much larger, but again, they did not know the politicians very well before the experiment.

The third study took place with Indian participants, right before a real election with three different candidates.

2. Discussion of the results and their interpretation

The first study asked for the participants’ preferences for one of two candidates. There were three different experiments in this study, with three groups of 34 participants each. To illustrate the kind of analysis, the first experiment and its results are discussed in detail. When asked to vote for one of the candidates before getting more information, 8, 11, and 17 participants in the three groups voted for candidate A, respectively. The other participants voted for candidate B, i.e., 26, 23, and 17 persons. The first group saw the ranking biased in favor of candidate A, the second one the ranking biased in favor of candidate B, and the third one the unbiased ranking. Note that, in total, all participants saw in principle the same 30 documents, but in a different order. The numbers of votes for candidate A after getting more information were (in that order) 22, 10, and 14, leaving 12, 24, and 20 for candidate B. Thus, candidate A won 14 new votes in the first group, which saw the ranking favoring her, lost one in the second, which saw the ranking favoring the other candidate, and lost three in the third, which is the control group.

What is the best measure to quantify the shift towards candidate A in the first group? On the one hand, one could say it is 14 out of 34 participants: since all were “undecided”, it might make sense to divide by the group size. However, since people who already voted for candidate A before seeing the list biased in her favor are unlikely to change their mind, it might be more precise to say that it is 14 out of those 26 participants who voted for candidate B beforehand. Both measures seem to be sensible.
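The two candidate measures can be written down in a few lines. This is a minimal sketch using the numbers of the pro-A group quoted above; the function name `shift_measures` is made up for illustration, and the gain is the net change in votes, since individual switches are not reported here.

```python
def shift_measures(pre_favored, post_favored, group_size):
    """Return the net gain of the favored candidate as a share of
    (a) the whole group and (b) those who voted for the other candidate
    in the pre-bias vote (two-candidate setting)."""
    gained = post_favored - pre_favored
    share_of_group = gained / group_size
    share_of_other_voters = gained / (group_size - pre_favored)
    return share_of_group, share_of_other_voters


# Pro-A group of the first experiment: 8 votes for A before, 22 after, 34 people.
of_group, of_other = shift_measures(pre_favored=8, post_favored=22, group_size=34)
print(f"{of_group:.2f} {of_other:.2f}")  # 0.41 0.54
```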

Epstein and Robertson repeated this first experiment twice, and thus there were in total 9 groups of 34 participants, of which 3 were biased in favor of candidate A. Over these three groups, the average percentage of voters of candidate B who could be shifted towards A was:

[14/26 + 11/18 + 5/17]/3 = [0.54 + 0.61 + 0.29]/3 = 1.44/3 = 0.48

In words: over the three first experiments, there were in total 61 persons that voted for B before seeing the search results, and of those 30 switched to candidate A after seeing the search results favoring A.

The same value for candidate B would be less impressive:

[1/11 + 8/20 + 6/21]/3 = [0.09 + 0.4 + 0.29]/3 = 0.78/3 = 0.26

In words: of the 52 voting for candidate A before seeing the search results, only 15 changed their mind after seeing the results favoring candidate B. Thus, the average percentage of undecided voters leaning to one side that can be shifted is strongly dependent on the candidate. Mind that even in the control groups, there is some shift towards candidate A, which might point to some dominance of candidate A. The results of the control groups were not considered by Epstein and Robertson in the rest of the analysis, although they might have given some baseline in favor of one of the candidates. In any case: when people are asked to vote in an election they do not care about, it is less astonishing that any kind of information will influence their decision. I also would not know which kind of search query would result in one list with links to two or more candidates. Nonetheless, it is interesting that some percentage of the same group of basically “undecided voters” can be shifted to either candidate, based on a biased ranking of the “search results”.
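The averages above can be recomputed from the fractions given in the two formulas. The sketch below assumes that each pair is (voters who switched, voters of the other candidate in the pre-bias vote); the helper names `mean_rate` and `pooled_rate` are introduced only for this illustration. It also shows the pooled rate, i.e., all switchers divided by all pre-bias voters of the other candidate, which weights the groups by size.

```python
# (switched, pre-bias voters of the non-favored candidate) per experiment
toward_a = [(14, 26), (11, 18), (5, 17)]  # groups biased towards A
toward_b = [(1, 11), (8, 20), (6, 21)]    # groups biased towards B


def mean_rate(pairs):
    """Unweighted average of the per-group conversion rates."""
    return sum(s / n for s, n in pairs) / len(pairs)


def pooled_rate(pairs):
    """All switchers divided by all voters who could have switched."""
    return sum(s for s, _ in pairs) / sum(n for _, n in pairs)


print(f"towards A: mean {mean_rate(toward_a):.2f}, pooled {pooled_rate(toward_a):.2f}")
print(f"towards B: mean {mean_rate(toward_b):.2f}, pooled {pooled_rate(toward_b):.2f}")
# towards A: mean 0.48, pooled 0.49
# towards B: mean 0.26, pooled 0.29
```

Either way of averaging leads to the same picture: the conversion rate towards candidate A is roughly twice the rate towards candidate B.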

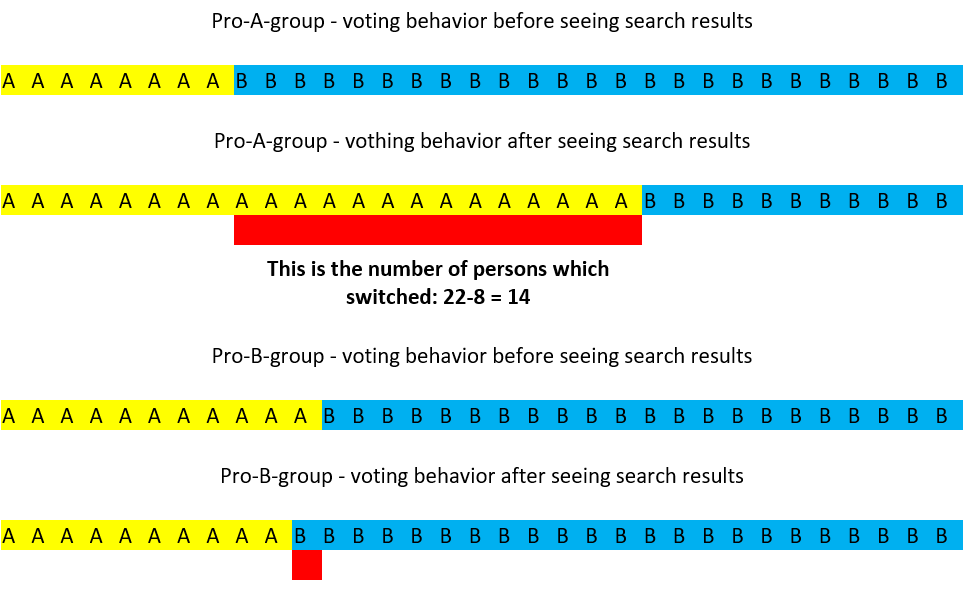

However, while the proposed measure does make some sense and while the percentage of persons that can be shifted is impressive, Epstein and Robertson introduce a different measure to quantify the effect. They call it the vote manipulation power (VMP). It combines and adds (!) the shifts of the two (or three) different candidates. It works like this: first, for each bias group, count the pre-bias voters who already favor the candidate towards whom that group’s ranking is biased, and sum these counts over the bias groups. Remember that there is one group of 34 people that get the search results favoring A and another group of 34 people that get the search results favoring B. See Fig. 1 for a visualization of the voting results before and after reading the artificial search results.

Now, the numbers of persons already in favor of the favored candidate are added: in the first experiment, in the pro-A group, there were 8 voters in favor of candidate A before they saw the ranking of the links. In the pro-B group, there were already 23 voters in favor of candidate B. Let this sum be denoted by x, with x = 31. Note that, if the groups of participants are large enough and of equal size, this sum is expected to be about the group size of 34, with some variance. This value x is taken as the basis for a percentage. The numerator is defined as the combined difference in the absolute number of post- and pre-votes with respect to all candidates. Thus, the numerator of the VMP of the first experiment is

(22 - 8) [new voters for candidate A after the participants saw the manipulated search results]

+ (24 - 23) [new voters for candidate B after the participants saw the manipulated search results].

The values that are subtracted are the absolute numbers of pre-bias voters of the two candidates in “their” bias group, i.e., in sum we subtract x. The sum of the new absolute numbers of voters is defined as x’ (here, x’ = 22 + 24 = 46). Thus, the difference between the total voters of the candidates favored in the two groups and the ones that were already convinced of them beforehand is x’-x = 15. It is the combined, absolute number of persons that were persuaded to change their opinion.

The VMP is now defined as (x’-x)/x, i.e., 15/31 = 0.48. In the article by Epstein and Robertson, this is (first) correctly verbalized as the “percent increase in subjects in the bias groups combined who said that they would vote for the favored candidate.” (Caption of Table 2, [1]). In their conclusion, however, Epstein and Robertson change the interpretation of the VMP by stating: “The power of SEME to affect elections in a two-person race can be roughly estimated by making a small number of fairly conservative assumptions. Where i is the proportion of voters with Internet access, u is the proportion of those voters who are undecided, and VMP, as noted above, is the proportion of those undecided voters who can be swayed by SEME, W – the maximum win margin controllable by SEME – can be estimated by the following formula: W = i*u*VMP.” [1]. In the light of the above argument, this is not true, because the VMP combines the effects of both manipulations. But any reasonable manipulation will only favor one of the two candidates and not both. Thus, it does not make sense to sum the effects up, neither in the first nor in the second study, which both focused on the choice between two Australian politicians whom the participants hardly knew.
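The definition of the VMP, as reconstructed in the text above, can be sketched in code as follows. The function name `vmp` and its list-based interface are choices made for this illustration, not something taken from the paper. For contrast, the sketch also prints the net gain of each single manipulation as a share of its own group of 34 participants.

```python
def vmp(pre_favored, post_favored):
    """VMP as described in the text: (x' - x) / x, where x sums the pre-bias
    votes for the favored candidate over all bias groups and x' sums the
    corresponding post-bias votes."""
    x = sum(pre_favored)
    x_prime = sum(post_favored)
    return (x_prime - x) / x


# First experiment: pro-A group (8 -> 22 votes for A), pro-B group (23 -> 24 votes for B).
pre = [8, 23]
post = [22, 24]
group_size = 34

print(f"VMP: {vmp(pre, post):.2f}")  # VMP: 0.48

# Net gain of each single manipulation relative to its own group.
single = [(after - before) / group_size for before, after in zip(pre, post)]
print([f"{s:.2f}" for s in single])  # ['0.41', '0.03']
```

The combined value of 0.48 is driven almost entirely by the pro-A group, and no single manipulation shifts that share of one group of voters.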

Looking at the larger groups of study 2 (online participants, same setting), it can be seen that 25% of the whole group that saw the ranking favoring candidate A were shifted towards her and 13% of the group that saw the ranking favoring candidate B were shifted towards him, but also that 6% shifted to candidate A in the control group. Measured against only those voters who favored the non-favored candidate in the pre-bias vote, the shifts are 45%, 28%, and 12%, respectively. The control group indicates that candidate A is objectively a better candidate and that the pre-bias vote fractions are mainly determined by the lack of knowledge about the candidates.

While the VMP is already unintuitive for two candidates, it becomes downright uninterpretable for three candidates. Epstein and Robertson conducted a third study in India, where people did know their three candidates and cared about the result of the vote. Not surprisingly, the shifts were no longer very large. Again, participants were split into groups. There were three groups, of which the first was biased towards candidate A, the second towards candidate B, and the third towards candidate C (and no control group). The group sizes differed: n1 = 709, n2 = 688, n3 = 753.

Computing again the sum of the voters preferring candidate A in the first group, candidate B in the second group, and candidate C in the third group (each before reading the biased ranking) results in x = 115 + 183 + 430 = 728 (i.e., about the average group size). The difference between the new sum of voters and the sum of the pre-bias voters is only 77 out of these 728. Thus, the VMP is 10.6%.

In contrast to the former studies, the group of participants cannot be seen as a group of undecided voters but it might contain some. These undecided voters could now be totally undecided or just oscillate between two of the three candidates. Thus, summing up the differences in pre- and post-votes might double-count some of the voters, namely those that were indifferent about all three candidates. Mathematically, the sum now only gives an upper bound of the absolute number of people that can be shifted combined over all candidates.

With this in mind, it can be shown that the VMP can be larger than 100%, as demonstrated by the following (hypothetical) data. Let’s pretend that all three groups consisted of 600 participants, and that in the pre-bias poll, all three candidates get 200 votes in all three groups. In each of the bias groups, the favored candidate then receives 420 votes in the post-bias poll and the other two candidates get 90 votes each. Here, x = 3*200 = 600, and thus the VMP is 3*(420-200)/600 = 660/600 = 110%. So, the total vote manipulation power is more than 100%, showing that the measure is effectively very difficult to interpret.
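Both the VMP of the third study and the hypothetical example can be checked with a short sketch. The function `vmp` below follows the verbal definition used in this article and is named here only for illustration; the post-bias counts of study 3 are derived from the pre-bias counts plus the per-group gains of 29, 16, and 32 votes that together make up the 77 additional votes.

```python
def vmp(pre_favored, post_favored):
    """(x' - x) / x, summed over all bias groups (see the definition above)."""
    x = sum(pre_favored)
    x_prime = sum(post_favored)
    return (x_prime - x) / x


# Study 3: pre-bias votes for the favored candidate in groups 1, 2, and 3,
# and the net gains of the favored candidate in each group.
pre_study3 = [115, 183, 430]
gains_study3 = [29, 16, 32]
post_study3 = [p + g for p, g in zip(pre_study3, gains_study3)]
print(f"study 3: {vmp(pre_study3, post_study3):.1%}")  # study 3: 10.6%

# Hypothetical data: three groups of 600, 200 pre-bias votes per candidate,
# 420 post-bias votes for the favored candidate in each bias group.
pre_hypo = [200, 200, 200]
post_hypo = [420, 420, 420]
print(f"hypothetical: {vmp(pre_hypo, post_hypo):.1%}")  # hypothetical: 110.0%
```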

Because of the potential double counting of people that sway between all three candidates, the VMP, which adds up the differences, is just an upper bound on the fraction of people that could be manipulated. Furthermore, dividing by the total group size does not make sense anymore, because the voters are not (all) undecided voters. Thus, for study 3, the VMP does not make the result credible and it cannot be said that “biased search rankings can shift the voting preferences of undecided voters by [the VMP]” [1].

I do agree with Epstein and Robertson that there is an effect on whom the participants vote for, albeit a much smaller one than the cited 20% or 80%. What would be more reasonable ways of quantifying the effect? One can compute the average shift towards the respective favored candidate as a percentage of the total group size. In the third study, this would be [29/709 + 16/688 + 32/753]/3 = [0.04+0.02+0.04]/3 = 0.03, i.e., 3%. Or it can again be argued, as done above in the two-candidate scenario, that votes can only be won from those that did not already vote for the favored candidate. This increases the percentage to [29/594 + 16/505 + 32/323]/3 = [0.05+0.03+0.1]/3 = 0.06, i.e., 6%. This can now verbally be interpreted as follows. First version: if society does not know to which side a search engine leans, the expected shift in the percentage of all voters towards one candidate is 3%. Second version: if society does not know to which side a search engine leans, the favored candidate can expect 6% of the other candidates’ voters to shift in his (her) favor. However, in my view, the clearest interpretation would be: the percentage of all voters who can be shifted is between 2 and 4%, depending on the candidate. The percentage of those voters who first said that they favored other candidates and who can be manipulated is between 3% and 10%. How many undecided voters there are in the three-candidate setting cannot be said. Only upper and lower bounds can be given.
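The two alternative measures and their ranges can be recomputed from the numbers of the third study. This is a minimal sketch; the variable names are chosen for illustration. Note that the text rounds each term to two decimals before averaging, so the unrounded averages printed here can differ slightly from the rounded ones.

```python
# Study 3, groups biased towards candidates A, B, and C.
group_sizes = [709, 688, 753]
pre_favored = [115, 183, 430]  # pre-bias votes for the favored candidate
gains = [29, 16, 32]           # net votes won by the favored candidate

# Gain as a share of the whole group.
share_of_all = [g / n for g, n in zip(gains, group_sizes)]

# Gain as a share of those who did not vote for the favored candidate before.
share_of_others = [g / (n - p) for g, n, p in zip(gains, group_sizes, pre_favored)]

for name, shares in (("all voters", share_of_all), ("other voters", share_of_others)):
    mean = sum(shares) / len(shares)
    print(f"{name}: mean {mean:.1%}, range {min(shares):.1%} to {max(shares):.1%}")
```

Reporting the range next to the mean keeps the differences between the candidates visible, which a single summed number hides.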

Anyway, even if the VMP were a reasonable measure, where do the often cited 20% and the 80% come from? Both stem from the study in India, but actually, the VMP is only 10.6%, as discussed above and as explicitly stated in the article [1, Table 5]. Epstein and Robertson discuss this result and add that some persons reacted paradoxically and in the end did not vote for the “favored” candidate, although they had voted for him before reading the artificial search result list. Epstein and Robertson call this an “underdog effect” and anticipate that a search engine would be able to distinguish so well between users as to not show the biased search ranking to those voters. By eliminating those voters who pre-voted for the favored candidate and did not do so in the post-vote, they come to a VMP of 19.8% (the 20% in their summary).

The 80% comes from multiple testing: since the authors had various extra information on their participants (at least the highest level of education, gender, political leaning in general, age, marital status, employment status), they also looked for the VMP in subgroups, openly stating that “the groups we examined are somewhat arbitrary, overlapping, and by no means definitive, they do establish a range of vulnerability to SEME.” [Supporting Information to the main article by Epstein and Robertson, page 1]. While this procedure does “establish a range of vulnerability”, it is akin to throwing a fair die long enough to find eight sixes in a sequence of ten throws. Such a procedure is called ‘multiple testing’ and is, if left uncorrected, a statistical mistake. Since their samples are not extremely large, it would be possible to find, just by chance, a group with a VMP of 80% while the real VMP is much smaller. One of the groups they found (of which we do not know the size) has a VMP of 80%, and this is where this number comes from.
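The die analogy can be made concrete with a small calculation. The sketch below is a simplification under stated assumptions: it treats the search as a number of independent blocks of ten throws (not overlapping windows in one long sequence), and the numbers of blocks are arbitrary values chosen for illustration. It says nothing about the actual subgroup sizes in the study, which are not known here.

```python
from math import comb


def prob_at_least(k, n, p):
    """Probability of at least k successes in n independent trials
    with success probability p (binomial tail)."""
    return sum(comb(n, j) * p**j * (1 - p) ** (n - j) for j in range(k, n + 1))


def prob_any(p_single, tries):
    """Probability that at least one of `tries` independent attempts succeeds."""
    return 1 - (1 - p_single) ** tries


# Chance of eight or more sixes in one block of ten throws of a fair die.
p_block = prob_at_least(8, 10, 1 / 6)
print(f"single block: {p_block:.2e}")

# Chance of seeing such a block at least once when many blocks are inspected.
for blocks in (1, 1_000, 100_000):
    print(f"{blocks:>7} blocks: {prob_any(p_block, blocks):.3f}")
```

A result that is extremely unlikely in a single block becomes likely once enough blocks are inspected, which is why an extreme value found by scanning many subgroups should not be read as the typical effect.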

3. Summary

Based on Epstein and Robertson’s paper, it can be stated that the order in which information about two or three political candidates is presented can influence a voter’s choice. It has a stronger influence on those who are not really involved in any current election (study 1 and 2). These might be called ‘undecided voters’, but they might also be those who would not actually go to the polls. In this first scenario, the average percentage of voters of the other candidate who can be converted can be around 50%. This seems to be largely an effect of the voters’ overall lack of information about the candidates in the pre-bias vote in this scenario. The control group might indicate that candidate A is objectively better than candidate B. However, the result of the control group was not used as a baseline.

In the third study, where Epstein and Robertson surveyed voters who were much more clearly decided, the overall effect is much smaller. The average conversion rate of voters of the other candidates is more likely to be 6%, i.e., 3% of all voters. However, the variance is large and depends on the personality of the candidate. Thus, there is some evidence for a potential SEME, but the numbers are not nearly as large as currently quoted by Epstein in various political discussions.

References

[1] Robert Epstein and Ronald E. Robertson: “The Search Engine Manipulation Effect (SEME) and its possible impact on the outcome of elections”, PNAS 112(33), E4512-E4521, 2015

[2] Robert Epstein: „SPUTNIK EXCLUSIVE: Research Proves Google Manipulates Millions to Favor Clinton”, 12th of September 2016, Sputnik News, https://sputniknews.com/us/201609121045214398-google-clinton-manipulation-election/

[3] Sandro Gaycken: “Die neue Macht der Manipulation”, Süddeutsche Zeitung, 20th of September, 2016. http://www.sueddeutsche.de/politik/aussenansicht-die-neue-macht-der-manipulation-1.3170439